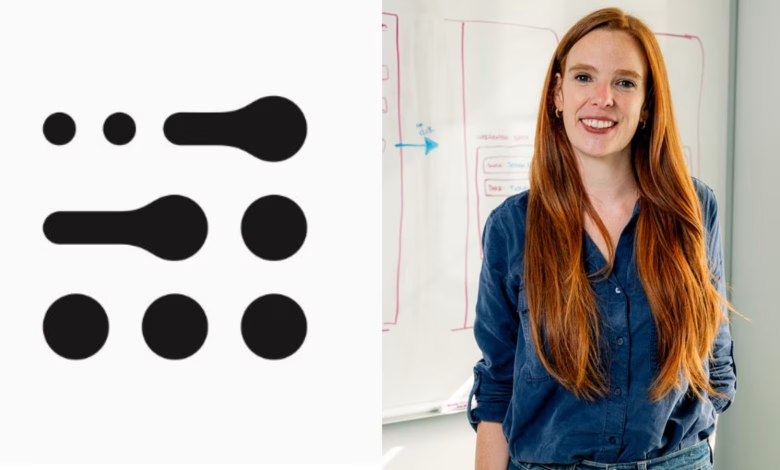

‘Simply making models bigger won’t get us far, AI must adapt like humans’: Adaption Labs CEO Sara Hooker

Much of the news coming out of the AI Impact Summit 2026 has been focused on language models, from small, sector-specific systems to larger foundational models, and the compute needed to train, deploy, and scale these models.

However, a growing group of researchers led by AI pioneers such as Richard Sutton and Yann LeCun argue that the scaling laws that once led to sharp gains in model performance are broken, and that simply throwing more GPUs (Graphics Processing Units) at the models will not lead to more accurate, higher-quality responses.

Sara Hooker, the CEO and co-founder of Adaption Labs, is among the key AI researchers invited to the Summit, and she is urging the industry to look beyond LLMs. Hooker has a strong track record of working at the frontier of AI research, from her days at Google Brain to leading Cohere Labs, the research lab of enterprise AI startup Cohere.

Named among the 100 Most Influential People in AI in 2024 by Time magazine, she is now focused on building AI systems that can learn continuously from real-world interactions and adapt on the fly with greater efficiency. In an interview with indianexpress.com, Hooker speaks in depth about how the era of simply adding more GPUs to larger models has reached a point of diminishing returns, and why the future of AI lies in adaptive learning.

Q: Could you offer a quick rundown of the history of scaling laws and how AI models have evolved alongside them?

Hooker: Up until 2012, there weren’t really any scaling laws, and for three decades deep neural networks didn’t work. 2012 changed everything because it was this lucky collision between GPUs and deep neural networks. GPUs are what got deep neural networks to finally work.

From then on, we’ve been addicted to scale. For the last 15 years, my whole view of computer science as a researcher, when I was at Google Brain and when I was leading Cohere Labs, has been ‘you build the biggest model possible. You throw as many GPUs as you can.’ Now we’re at an inflection point: what happens next? My perspective is still somewhat controversial in many circles. But from my perspective, scaling is actually going through a crisis, because the transformer architecture can’t be scaled any further while still delivering gains. Scaling laws are broken now.

Q: So why are scaling laws failing now?

Hooker: Because so much of what determines whether you can scale is what we call the model type. Think of the AI model as a human born with some amount of intelligence, because our genetics, over thousands of years, have given us that. Even as babies, we’re somewhat smart. That’s the model. Even when it’s not trained, it has the ability to be very powerful.

The truth, though, is that a new model is kind of like shifting the distribution of human intelligence up a bit each time. But even within that, there’s a distribution. I would say that how you train the model, and how the model explores the world, is more like our intelligence over our lifetime, because it’s how we learn.

Q: Could you unpack the debate around scaling LLMs as a path to generalisation? Do you agree that reinforcement learning (RL) is a dead end? Is there any evidence to support that view?

Hooker: The divide right now is that there’s a group of people who say, no, it’s just the model; you just throw GPUs at it. Then there’s another group of people who say, no, the model is actually dead; we can’t get more out of the model. We need to think more about how the model interacts with the world and how the model learns.

I don’t agree that RL is dead, because test-time scaling, which is about learning and interacting, similar to how humans get more intelligent over our lifetimes, is still quite promising. But just making the model bigger is done.

Q: In a recent podcast appearance, Anthropic’s Dario Amodei said that pre-training continues to lead to gains.

Hooker: I disagree with Dario on pre-training. I think his view is probably that you still get a lot of return from pre-training, but my point is that I don’t think anyone is going to 4x the size of their model next year. So even if you’re still seeing some gains, it’s not like four years ago, when you would quadruple or 10x the size of your model every year. So there we disagree.

Q: On RL, Dario also said that expanding to a broader mix of tasks, such as math competitions and coding, could improve the general intelligence of AI models.

Hooker: I actually agree with him on RL, which is typically post-training. Data quality matters a lot. So I’m not against the view that there are some places where we still see a lot of return. But it’s not in training, and that’s largely because the transformer architecture is dead.

What’s great about those RL techniques is that they are way cheaper than pre-training. RL, post-training, and test-time scaling take way less compute, but the return on that compute is way higher, which means that it’s much more about who can innovate than about who has the most GPUs. It makes the world fun again. It means that more innovation can come from more places.

Q: What is Adaption Labs about? How are the AI systems you are building architecturally different from LLMs?

Hooker: Most people intuitively understand that when the same LLM is shipped to billions of people, it fails them, because people essentially end up being prompt engineers. They end up doing acrobatics around the model to try to make it work for them, and that puts an enormous burden on users, and on entire countries, to make it work for their context. It’s also a waste of compute to use the same massive model for easy questions and hard problems.

Adaption Labs is about moving away from building the same model for everyone.

At Adaption Labs, we are building models that continue to learn from new data, and ensuring that we don’t spend the same amount of compute on every problem.

For us, RL and test-time scaling are still relevant because our focus is on real-time adaption. We want you to be able to see a real-time difference when you give feedback and change the behaviour of the model. We are also focusing on leveraging gradient-free techniques. So you don’t need to re-train the model; you just change its behaviour either by changing the weights without training, or by changing the decoding strategy.

Q: What is continual learning? How is it different from adaptive learning and in-context learning?

Hooker: Continual learning is a version of adaption. It typically means that over time, the model learns and continues to learn with new data. There’s also the question of, can the model just adapt to different types of data at this moment. I find both problems interesting and at Adaption Labs we’re working on both.

Continual learning is important because when you build the next version of an AI model and ship it to everyone, you move on to developing a new model. So the previous version of the model stays stuck at that level of intelligence. Humans, in contrast, are not stuck at the level of intelligence we had when we graduated from secondary school for the rest of our lives. We continue to adapt as we go into jobs, collaborate, and learn from each other. Our goal is to build that same inherent human quality of adaptation into AI, and usher in a new era of intelligence.

But we still have to figure out how to do continual learning extremely efficiently. Right now, the way people do continual learning is essentially re-training the model on more data using the same techniques, which is a computational burden.

In-context learning is similar to prompt engineering. All you’re doing is stuffing a ton of history into the prompt, but it doesn’t change the model weights or the model’s persistent behaviour.

Q: If LLMs become less relevant, what would that mean for countries like India where big tech is ramping up data centre investments here?

Hooker: Infrastructure is always very valuable. Investments in infrastructure typically signal a willingness to support an ecosystem and for me that’s quite powerful because it means there’ll be more technical ecosystems in different parts of the world that can shape AI.

What Adaption Labs is focused on is making it cheaper to change a model’s behaviour for each individual user. Even so, many people want to use AI, and having infrastructure to support that demand is valuable.

Q: You said that GPUs work well with LLMs, but if we have an architecturally different AI model tomorrow, will we be able to retrofit these data centres?

Hooker: It’s a good question, because it’s very hard to stray from deep neural networks. It is a risk: when we see the migration from Nvidia’s H100s to Blackwells, it’s generational, and we’re constantly retrofitting data centres. But is it a risk that should deter you from building data centres? No. Getting them built in the first place is still significant.

Q: How can India foster more AI research talent and establish globally competitive frontier AI labs here? Who should fund these labs?

Hooker: India first needs to create the space for it. Innovation typically happens when there’s a bit of breathing room, and when people have the time to think through different approaches. It is also important to take bets on more than one institution. Typically, funding should go to multiple institutions, because you never know which one is going to lead to a culture of innovation.

I would definitely recommend a distributed approach: different states should have different innovation centres, and there should be contrarian bets on the technology. Engineering tends to be about operationalisation and execution. Innovation tends to be about the unexpected, and you can never predict where the unexpected will happen.